Four Generations of Broken Promises: Why AI SOC Agents Might Actually Be Different

- David O'Neil

- Cybersecurity

- 18 Mar, 2026

Series: The SIEM & AI Reckoning — Article 1 of 10

Over twenty years and hundreds of vendor pitches, one line never changes:

“This is going to change everything.”

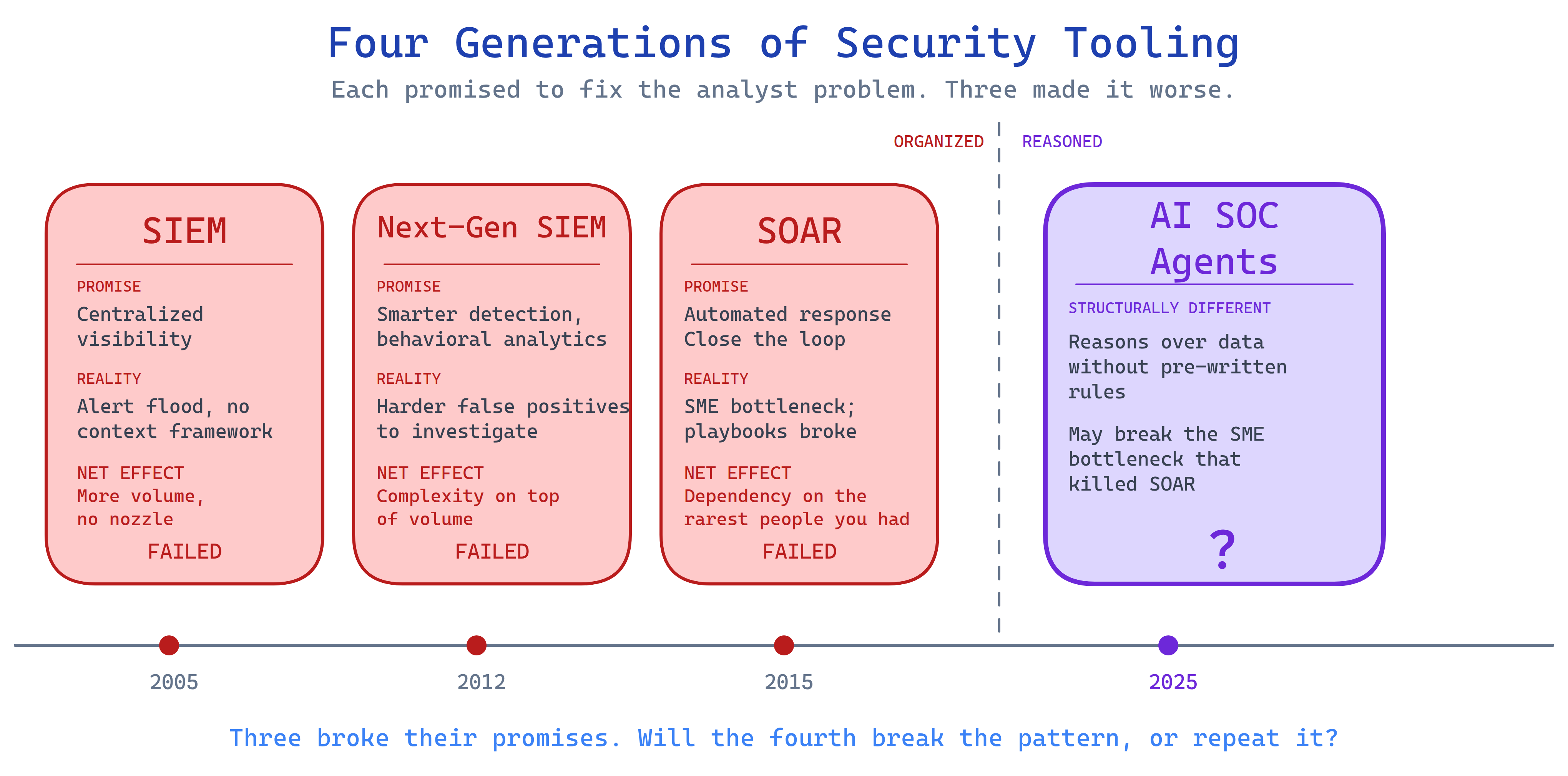

2005, SIEM. 2012, Next-Gen SIEM. 2015, SOAR. And now, in 2025–2026, AI SOC Agents. Four generations. Three broke their promises. The fourth is making the same ones.

But here’s what the marketing decks never said. Each of the first three generations made the analyst’s job harder before it made it easier. For many teams, it never got easier at all.

The workforce shortage hit 4.8 million globally by 2025. It’s still growing. Three generations of tools, and we’re further behind than when we started.

AI SOC Agents operate on a different principle than everything that came before them. Not a better way to organize data, but a way to reason over it. The question isn’t whether the fourth generation is technically better. It’s whether it finally breaks the pattern where the cure is as hard to manage as the disease.

Three Generations of Security Tooling, One Pattern

We don’t need a history lesson. We need the pattern.

SIEM (2005): The Fire Hose Problem

Before SIEM, security teams had blind spots but manageable workloads. After SIEM, we had visibility into everything and no framework for deciding what any of it meant.

Alert volume exploded. The fire hose was on, and nobody had a nozzle.

Data without context is noise, and SIEM gave us an ocean of it. Analysts spent more time triaging alerts than investigating threats. We solved the visibility problem and created the overwhelm problem.

Next-Gen SIEM (2012): Context for the Cases We Already Knew About

The industry recognized the problem and responded with behavioral analytics, ML-based anomaly detection, and smarter correlation. Give analysts context, not just data.

The catch: context only for behaviors someone had already anticipated. Novel attack paths, edge cases, and attacker creativity remained invisible. And the alerts that were generated required more analyst judgment to validate, not less. A signature hit is binary. A behavioral anomaly requires interpretation.

We added complexity on top of volume. Better tools for swimming, in a deeper ocean.
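
To make the difference between a binary signature hit and a behavioral anomaly concrete, here is a minimal sketch. The hash list, the login counts, and both function names are made up for illustration; no specific product works exactly this way. The point is the return types: one check answers yes or no, the other returns a number that someone still has to interpret.

```python
from statistics import mean, stdev

# Placeholder indicator list (hypothetical value, not a real malware hash).
KNOWN_BAD_HASHES = {"a" * 32}


def signature_hit(file_hash: str) -> bool:
    """Binary check: the hash is on the list or it is not."""
    return file_hash in KNOWN_BAD_HASHES


def anomaly_score(observed: float, history: list[float]) -> float:
    """Z-score of an observed value against the entity's own history."""
    spread = stdev(history)
    if spread == 0:
        # Flat history gives no scale to compare against; this sketch returns 0.
        return 0.0
    return (observed - mean(history)) / spread


# Illustrative daily login counts for one user.
logins_per_day = [4, 5, 6, 5, 4, 6, 5]

print("signature hit:", signature_hit("a" * 32))
print("anomaly score:", round(anomaly_score(9, logins_per_day), 2))
```

The signature check needs no judgment. The score does: is the cutoff 3? 4? Does it differ for a service account? That threshold decision is the extra analyst work behavioral detection introduced.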

SOAR (2015): Automating the Wrong Layer

By this point, analyst burnout was undeniable. SOAR’s answer: automate the response. Close the loop. Let the playbook handle the obvious stuff so analysts can focus on what matters.

This is where it really went wrong, and it’s worth being specific about why.

SOAR required a rare, expensive hybrid. Someone who deeply understood the security use case and could translate it into automation logic. Not a developer; they didn’t know the threat context. Not a tier-1 analyst; they couldn’t build the playbooks. You needed a security engineer with automation skills, and those people were already the most constrained resource in the SOC.

SOAR created a bottleneck at exactly the point where you could least afford one. The people who could have built the playbooks were too busy fighting fires to build them. When playbooks were built, they broke on edge cases, which required the same rare people to fix them.

Gartner retired the SOAR Magic Quadrant. That’s about as polite as analysts get when something hasn’t worked.

| Generation | The Promise | What Actually Happened | Net Effect on Analysts |
|---|---|---|---|
| SIEM (2005) | Centralized visibility | Alert flood, no context framework | More volume, no nozzle |
| Next-Gen SIEM (2012) | Smarter detection | Context only for anticipated scenarios | Complexity on top of volume |
| SOAR (2015) | Automated response | SME bottleneck; playbooks broke on edge cases | Dependency on the rarest people you had |

Three generations. Each one added burden before, or instead of, removing it. Do AI SOC Agents finally break this pattern?

The Fourth Generation: Why AI SOC Agents Are Structurally Different

Here’s what I’m seeing right now as a CISO evaluating these tools.

Gartner placed “AI SOC agents” at the Peak of Inflated Expectations on its 2025 Hype Cycle. Only 9% of security professionals report being “very confident” in AI-generated alerts (Gurucul, 2025). The SANS 2025 SOC Survey ranked AI/ML tools dead last in satisfaction across all technology categories, even though roughly 40% of SOCs have adopted them.

We have every reason to be skeptical.

Two things are structurally different about AI SOC Agents:

AI may break the SME bottleneck. Natural language prompting lowers the floor for who can build and maintain automation logic. A tier-1 analyst can describe a workflow in plain English and get something useful back. That was never possible with SOAR playbooks. The dependency on a rare security-engineer-plus-automation-skills hybrid may no longer be the rate limiter.

AI reasons over unstructured data without pre-written rules. Instead of “this IP triggered this signature,” you get “this sequence of behaviors across three systems, in the context of this user’s role and this week’s threat landscape, looks like lateral movement because…” This directly addresses the context gap that SIEM and Next-Gen SIEM couldn’t close.

The data backs this up. Microsoft Copilot for Security produced measurable MTTR reductions across 378 organizations: 25.9% faster investigation, 30% reduction in mean time to respond. Palo Alto’s XSIAM crossed $1B in cumulative bookings with documented ROI.

But we have to say this out loud: AI is making attackers better at the same rate. Generative AI has lowered the barrier to automated phishing, reconnaissance, and exploit development. If we adopt AI defensively without a strategy, we’re running faster toward the wrong mountain. Speed doesn’t help when you haven’t aligned on where you’re going.

There’s also a subtler challenge that doesn’t get enough attention. AI’s reasoning quality depends entirely on the context it receives. Feed it clean, enriched, well-structured security data and it performs. Feed it fragmented logs with inconsistent schemas and missing fields, and it produces confident analysis built on a broken foundation.

The context problem that plagued every previous generation doesn’t disappear with AI. It moves upstream. Your data architecture becomes the bottleneck instead of your analyst headcount.
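
One way to see the upstream problem before a model does is to measure how many events even carry the fields an investigation needs. The sketch below is a rough illustration under stated assumptions: the four required field names and the sample events are hypothetical, and a real schema would be far larger.

```python
# Hypothetical minimum schema; the field names are illustrative only.
REQUIRED_FIELDS = ("timestamp", "host", "user", "action")


def missing_fields(event: dict) -> list[str]:
    """Return the required fields that are absent or empty in one event."""
    return [f for f in REQUIRED_FIELDS if event.get(f) in (None, "")]


def completeness(events: list[dict]) -> float:
    """Fraction of events that carry every required field."""
    if not events:
        return 0.0
    complete = sum(1 for e in events if not missing_fields(e))
    return complete / len(events)


# Made-up sample events: one complete, one with an empty user, one with no timestamp.
events = [
    {"timestamp": "2026-03-18T09:00:00+00:00", "host": "ws-14", "user": "jdoe", "action": "login"},
    {"timestamp": "2026-03-18T09:00:05+00:00", "host": "ws-14", "user": "", "action": "login"},
    {"host": "db-02", "user": "svc-backup", "action": "read"},
]

print(f"{completeness(events):.0%} of events are complete")
for e in events:
    gaps = missing_fields(e)
    if gaps:
        print("missing:", gaps)
```

A number like this, tracked per log source, shows where the model would be reasoning over gaps.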

Four Lessons from Twenty Years of Security Tool Failures

Twenty years of tool failures left us four lessons, if we’re willing to use them:

Visibility without context is noise. Every generation gave us more data. AI is not exempt. It can hallucinate context that doesn’t exist, which may be worse than no context at all.

Automation without judgment creates gaps. SOAR playbooks broke on edge cases. Agentic AI will break in different, less predictable ways. Human oversight isn’t bureaucratic friction. It’s the mechanism that catches where the tool fails.

Tools expose missing expertise; they don’t replace it. Every generation promised to reduce the analyst burden. Every generation required more skilled people to manage the tools. AI might change this, but only if we deploy it where it solves a real problem, not where it demos well. We still need people who know our data and our threat landscape. AI amplifies that knowledge. It doesn’t create it.

Tool adoption isn’t strategy. It’s a purchase order. Having SOAR didn’t mean using SOAR effectively. Organizations that deploy AI without targeting specific failure modes will repeat the early SIEM experience: an expensive system producing impressive outputs that nobody acts on.

Align on the Mountain Before You Start Climbing

Three generations have played out. We’re living through the beginning of the fourth. If there’s one thing I’d tell a fellow CISO evaluating AI for security operations, it’s this: the technology is finally capable enough. The risk is in the implementation.

Pick the mountain first. What specific problem are we solving? Alert fatigue at tier-1? Slow enrichment during incident response? Inconsistent investigation quality across analysts? Pick one. Measure it before you start. Measure it after. If your team can’t name the failure mode AI is supposed to fix, you’re not ready for the vendor conversation yet.
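
"Measure it before, measure it after" can be as simple as comparing medians for the one metric you picked. A minimal sketch, assuming minutes-to-triage as the metric; the sample numbers are invented for illustration and the function name is my own.

```python
from statistics import median


def percent_change(baseline: list[float], after: list[float]) -> float:
    """Percent change in the median; a negative result means the metric dropped."""
    before = median(baseline)
    if before == 0:
        raise ValueError("baseline median is zero; percent change is undefined")
    return (median(after) - before) / before * 100


# Made-up minutes-to-triage samples, for illustration only.
baseline_minutes = [22, 35, 18, 41, 27]
pilot_minutes = [15, 21, 12, 30, 19]

change = percent_change(baseline_minutes, pilot_minutes)
print(f"median triage time changed by {change:.1f}%")
```

The median is used here because a handful of marathon investigations can drag a mean around. Whatever statistic you choose, fix it before the pilot starts so the comparison stays honest.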

Keep humans in the loop longer than feels necessary. Vendors will say their system is ready for autonomous action. Maybe it is. But the asymmetry matters. An analyst catching an AI false positive costs minutes. AI taking autonomous action on a false positive can cost significantly more. Trust needs to be earned, not assumed. Even if the first step is just observing what the AI recommends without acting on it, that’s the right starting point.
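
The observe-only starting point can be sketched as a shadow log: record what the AI would have done next to what the analyst actually did, and act on neither automatically. Everything below is a hypothetical illustration; the record fields and action names are assumptions, not any vendor's schema.

```python
from dataclasses import dataclass


@dataclass
class ShadowRecord:
    alert_id: str
    ai_recommendation: str  # what the agent would have done
    analyst_decision: str   # what the human actually did


def agreement_rate(records: list[ShadowRecord]) -> float:
    """Share of alerts where the AI and the analyst chose the same action."""
    if not records:
        return 0.0
    agreed = sum(r.ai_recommendation == r.analyst_decision for r in records)
    return agreed / len(records)


def disagreements(records: list[ShadowRecord]) -> list[ShadowRecord]:
    """The cases worth reviewing by hand before granting any autonomy."""
    return [r for r in records if r.ai_recommendation != r.analyst_decision]


# Invented shadow-mode log for illustration.
log = [
    ShadowRecord("A-1", "close", "close"),
    ShadowRecord("A-2", "isolate_host", "close"),
    ShadowRecord("A-3", "escalate", "escalate"),
    ShadowRecord("A-4", "close", "close"),
]

print(f"agreement: {agreement_rate(log):.0%}")
for r in disagreements(log):
    print(f"{r.alert_id}: AI said {r.ai_recommendation}, analyst chose {r.analyst_decision}")
```

The disagreement list matters more than the headline rate. In this made-up log, the one miss is the AI wanting to isolate a host the analyst would have left alone, which is exactly the expensive kind of false positive.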

Invest in your data before you invest in AI. This is the step most teams want to skip, and it’s the one that matters most. Every AI system is downstream of data quality. Duplicate logs, misconfigured asset data, inconsistent source formatting: that’s what you’re feeding the model. The boring infrastructure work is the prerequisite, not the afterthought. (More on this in an upcoming article in this series.)
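
As a small taste of that boring work, here is a sketch of catching duplicate logs that differ only in formatting. It assumes ISO 8601 timestamps with explicit UTC offsets and three illustrative field names; real pipelines deal with far messier input.

```python
from datetime import datetime, timezone


def normalize(event: dict) -> tuple:
    """Build a comparison key: UTC timestamp, trimmed lowercase host, action."""
    ts = datetime.fromisoformat(event["timestamp"]).astimezone(timezone.utc)
    return (ts, event["host"].strip().lower(), event["action"])


def deduplicate(events: list[dict]) -> list[dict]:
    """Keep the first event for each normalized key, preserving order."""
    seen = set()
    unique = []
    for e in events:
        key = normalize(e)
        if key not in seen:
            seen.add(key)
            unique.append(e)
    return unique


# Invented events: the first two are the same login reported by two sources
# with different timezone offsets and host formatting.
raw = [
    {"timestamp": "2026-03-18T09:00:00+00:00", "host": "WS-14", "action": "login"},
    {"timestamp": "2026-03-18T10:00:00+01:00", "host": "ws-14 ", "action": "login"},
    {"timestamp": "2026-03-18T09:05:00+00:00", "host": "ws-14", "action": "logout"},
]

print(f"{len(raw)} raw events, {len(deduplicate(raw))} after deduplication")
```

Without the normalization step, a naive comparison treats those first two events as different, and the model sees two logins where there was one.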

Treat this as a program, not a deployment. SIEM took years to mature. SOAR took years. AI will too. The organizations that succeed won’t be the ones that bought the most advanced platform on day one. They’ll be the ones that started deliberately, measured rigorously, and built toward maturity with documented outcomes as the goal.

| Maturity Level | What It Looks Like | Timeline |
|---|---|---|
| Exploring | Evaluating vendors, running pilots on low-risk use cases | Months 1–3 |
| Targeting | AI deployed against 1–2 specific failure modes, measured against baselines | Months 3–9 |
| Integrating | AI woven into daily SOC workflows, human oversight at defined thresholds | Months 9–18 |
| Optimizing | Expanding autonomy based on earned trust and documented outcomes | Year 2+ |

Where I Land

Three generations of vendor promises. Each one better than the last. Each one harder to work with than what came before it, at least in the early years.

Now we’re in the fourth. AI SOC Agents address the two structural failures the previous generations couldn’t crack: the context gap that SIEM left open, and the SME bottleneck that strangled SOAR. For the first time, the technology operates on a principle that could actually reduce the burden on analysts instead of adding to it.

Could. Not will. That depends on us.

Pick the mountain. Keep humans in the loop. Invest in the data. Treat it as a program. Do those four things, and this generation of tools earns a different ending than the last three.

We’ve been given another shot. Let’s not waste it the same way.

Your next step: Before your next AI vendor meeting, write down three specific problems you want AI to solve. Not “improve our SOC.” Failure modes with measurable baselines. If you can’t name them, the meeting can wait.

Next in this series: The SIEM Cost Trap — Why Your Data Lake + AI Agents Will Win

David O’Neil is a CISO and builder focused on the practical realities of security operations, AI adoption, and building programs that actually work. Find him on Twitter/X.